Are You a Horse?

A new twist on this old comparison gives you a better way to evaluate AI’s impact on your job

Do you believe AI will create more jobs? It probably will. Just not for you, or for me, or for most people reading this.

But what really determines whether you will be replaced?

TL;DR: There’s a SKILL.md doc at the bottom of the page that you can use as a different way to explore this article.

The Horse Analogy Isn’t New

It was presented as a way to think about AI and jobs decades ago, but the conversation was only just starting back then. What follows builds on that work, but aims to give it more tangible structure.

Erik Brynjolfsson and Andrew McAfee popularised it in The Second Machine Age in 2014, then in the papers and articles that followed it. CGP Grey’s video Humans Need Not Apply, also from 2014, brought the same basic idea to a wider audience.

They both say this:

Horses had one big advantage, which was muscle power.

They lost that advantage when the car showed up.

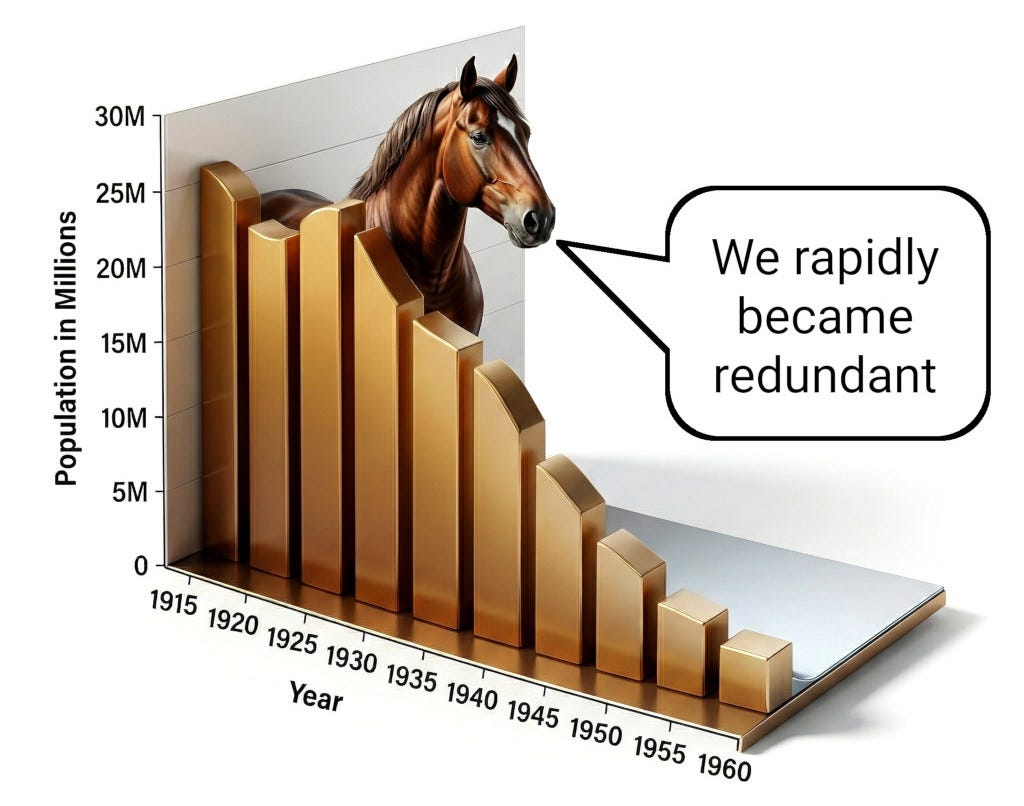

The horse population in the United States went from 26 million in 1915 down to 3 million by 1960. The question they both ask is whether the same thing might happen to humans now that AI is taking over our cognitive work.

They borrowed this analogy from the economist Wassily Leontief, who used it back in 1982. Leontief had already noted the most important difference between us and horses: Horses don’t vote. In clear contrast, humans do, which means we have political paths that can protect us. This is the closest any of the earlier versions get to a structural argument, and of course it is true.

Then in 2025, Maxwell Tabarrok wrote a piece on his Substack that is the strongest pushback I’ve seen against the horse analogy. He makes three detailed arguments for why humans won’t end up like horses. I’ll come back to these in detail later.

What I want to add to this conversation now is quite specific. All of these versions used horses as a comparison - an effective way to make their argument feel real. But I think this comparison should go further than any of them take it. It works as a real structural claim about how economies work, and how value is created. But first, I want to paint a more up-to-date picture using a new perspective.

Mark Pesce’s Post-Watershed Tokenomics

Recently Mark Pesce shared his tokenomics framework (AI tokens, not crypto), and that’s where our argument starts. If you haven’t read it, stop here and go read it. It’s great.

TL;DR The framework lays out three pieces. Infrastructure mints tokens - the units of cognition that come out of datacentres, GPUs, and all the systems running on top of them. Harnesses spend those tokens - the tools, agents, workflows and humans that put this cognition to use. Alpha is the value left over once you account for what you spent on the tokens. This is the thing that decides who comes out ahead and who doesn’t. And Mark’s main point is that alpha can’t be cognitive. If the thing you’re betting on is just more thinking, then you’re betting on something that’s being mass-produced at near-zero cost.
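To make the three pieces concrete, here is a minimal sketch of the arithmetic as I read it: alpha is the value of the output minus what the tokens cost. The `Harness` class, the prices and the output values are all made up for illustration. They are not from Mark’s framework.

```python
from dataclasses import dataclass


@dataclass
class Harness:
    """A hypothetical harness: anything that spends tokens to produce output."""
    name: str
    tokens_spent: int                 # tokens consumed to do the work
    price_per_million_tokens: float   # assumed token price, in dollars
    output_value: float               # assumed market value of the output, in dollars

    def token_cost(self) -> float:
        return self.tokens_spent / 1_000_000 * self.price_per_million_tokens

    def alpha(self) -> float:
        # Alpha: the value left over once the tokens are paid for.
        return self.output_value - self.token_cost()


# Made-up numbers, for illustration only.
report = Harness("market report", tokens_spent=2_000_000,
                 price_per_million_tokens=5.0, output_value=400.0)

# Same work, but anyone can now regenerate it, so the price of the
# output has fallen to roughly what the tokens cost.
commoditised = Harness("market report (commoditised)", tokens_spent=2_000_000,
                       price_per_million_tokens=5.0, output_value=10.0)

for h in (report, commoditised):
    print(f"{h.name}: cost ${h.token_cost():.2f}, alpha ${h.alpha():.2f}")
```

In this toy version the tokens cost the same in both cases. The only thing that changes is what the market will pay for the output, and that is where the alpha disappears.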

Mark built a framework that’s useful for business. A way for organisations to think about competitive advantage as cognition becomes a commodity - what does your company have that the competition’s tokens can’t buy? That’s a tangible and useful framework, and his answers there are sharp.

But I want to take the same question and turn it inwards. Not “what does my company have”, but “what do I have?” Because the same logic that’s deciding which businesses win and lose is also deciding which people stay economically relevant. When our cognitive work loses its market value, what’s left of the value we each hold as individuals?

Not the stock-standard AI-displacement question, “will my job get automated?” That one’s too easy to either panic about or just dismiss. The harder version is one layer down:

What do I have that tokens can’t buy?

Let’s apply the same words Mark uses, but ask them personally.

Asked that way, it doesn’t let you off the hook with any comfortable, easy answers. Your expertise is cognition. Your taste is cognition. Years of accumulated experience are baked-in cognition. All of this cognitive work can be replaced with tokens over time. All of them get cheaper as the cost of producing the same output drops. And none of them are the kind of thing you can hold onto as an asset to retain value.

Mark is right about the question. But he’s not quite right about the metaphor.

From Currency To Fuel

Mark’s framework calls tokens a currency. I don’t think that’s quite right.

Currencies can be circulated. You can save them to store value over time. Tokens don’t do any of that.

A better fitting metaphor may be fuel, and the combustion it unlocks.

Tokens get created and consumed at the same instant - like the heat from a fire, combustion in an engine, or the electricity flowing through a wire. You can’t stockpile tokens. There’s no token inventory and no token wealth. The only thing in this system you really can stockpile is the infrastructure or capacity to produce tokens: GPUs, datacentres, energy contracts, model weights.

So the currency metaphor breaks down, but it points us in a very useful direction. Towards the framework of energy economics.

Infrastructure (chips, datacentres, power grids and model weights) is the storable part of the system. It’s what you can build, own and accumulate. This is the only place capital can really build up. By contrast, tokens are the fuel the infrastructure produces and the engines consume - all in the same instant. Harnesses are the engines. The work these engines produce is the output. And alpha is what’s left over after you pay the cost of all your inputs.

Mark’s three pieces are still intact. But this energy frame is closer to what’s really happening. This fuel metaphor is useful and it makes the horse story clearly relevant.

But if you push on that metaphor, then it struggles too.

From Fuel To Units Of Work

Pushing past the fuel metaphor doesn’t lead to another metaphor. It leads to a literal description.

Real fuel (oil, coal and gas) is a substance. You can extract it from the ground, store it in physical tanks and then ship it across oceans. Then you can burn it later. There’s a delay between the production and consumption. You can hold it as inventory.

Tokens are not like that. Nothing about tokens gets extracted, stored, or shipped. The “fuel” in this metaphor magically comes into existence at the moment the engine runs, then vanishes in the very same instant. If we push this metaphor one step further then the substance just dissolves.

I think the most honest version is this:

Tokens aren’t really a substance at all. They’re an accounting unit.

Closer to a meter reading than a fuel.

The kilowatt-hours (kWh) on your electricity bill measure work flowing through your meter. It’s just accounting. It’s not some literal energy sitting in a vault somewhere. Yes, kWh energy can be stored in batteries and dams, but the kWh on your bill aren’t those kWh. They’re just a count of what passed through the meter when you used it. Tokens work in the same way. There is no token vault. There’s just some compute capacity that does cognitive work, and then a count of how much it did - measured in tokens.
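The meter idea can be sketched in a few lines. This is a hypothetical `TokenMeter`, not any real provider’s billing API, and the price is an assumption. The point is what the class does not have: there is no balance to hold, transfer or spend later, only a running count of work already done.

```python
class TokenMeter:
    """Counts tokens as work happens. It stores a count, never the work itself."""

    def __init__(self) -> None:
        self.total_tokens = 0

    def record(self, tokens: int) -> None:
        # Called at the moment the work is done; nothing is held for later.
        if tokens < 0:
            raise ValueError("a meter only counts forward")
        self.total_tokens += tokens

    def bill(self, price_per_million_tokens: float) -> float:
        # The bill is just the count multiplied by an assumed price.
        return self.total_tokens / 1_000_000 * price_per_million_tokens


meter = TokenMeter()
meter.record(250_000)   # one request's worth of cognitive work
meter.record(750_000)   # another request
print(meter.total_tokens, meter.bill(price_per_million_tokens=2.0))
```

There is deliberately no `withdraw` or `transfer` method. Anything you could stockpile lives upstream of the meter, in the capacity that did the work.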

Now we’ve refined the framework one more level. The real physical fuel powering the system is electricity. The real engine is GPUs running model weights. The actual work output is cognitive labour, and that’s measured in tokens. The only storable layer in the entire system is the production capacity itself: chips, datacentres, model weights, energy contracts. Everything downstream of that capacity is ephemeral.

Where All Three Lead

This gives us three framings, three different ways to see tokens. Currency, fuel and accounting unit. The first two are metaphors. The third isn’t a metaphor at all - it’s the literal description of what tokens actually are. Each is a better fit than the last, and the last is most accurate because it’s not really a fit, it’s the thing itself.

And here’s what really matters:

The two metaphors and the literal description all predict the same answer about where the value lives.

The currency view predicts alpha lives wherever the currency is issued. The fuel view predicts it lives in extraction and engine ownership - those who own the production capacity capture the value when fuel cheapens and engines are commoditised. And the unit-of-work view predicts the value lives in the production capacity itself, and at the points where the measured work output finally translates into physical action.

All three of these views point at the same set of real-world things: chips, datacentres, energy contracts, model weights, regulatory positions and the interfaces where cognitive work crosses over into physical reality.

The underlying claim each view is pointing at is structural.

Storable things accumulate value. Ephemeral things don’t.

Cognitive work itself is ephemeral. The artefacts it produces - code, text, images, videos - can persist, but the value of those artefacts commoditises toward the cost of regenerating them, which is heading toward zero. What stops the commoditisation isn’t the cognition. It’s something non-cognitive wrapped around the cognition - brand, audience, IP, legal weight, physical instantiation. Alpha can live in those wrappers. It cannot live in the cognition itself.

Now if we ask the three questions again:

What do I have that tokens can’t buy?

What do I have that can’t be produced by burning fuel?

What do I have that’s storable, and not cognitive?

We can see that they all point at the same answer.

The Horse Analogy As Structure

With the new view we just built, it’s now clear that the horse comparison isn’t merely rhetorical anymore. It’s a literal, structural claim.

All the earlier versions of this analogy treated horses as a general “something” that had been replaced, but they didn’t get specific about what “type” of something it was. Now we can be more precise. Horses weren’t workers. And they weren’t labour. They were the general-purpose engine of our pre-mechanical economy. They turned feed into useful work in the form of hauling, ploughing, transport and military movement. Until the early twentieth century, most of the world had no other engine that could do this many different things.

When the internal combustion engine showed up, it was simply a better engine for the same job. It was cheaper per unit of work, it was more scalable, it didn’t get tired and it kept getting better every year. And this led to the horse population of the United States plummeting from 26 million in 1915 to 3 million by 1960. It wasn’t because horses got worse - their capability didn’t change at all. It was because a better engine had arrived. Some niches did survive - recreation, ceremony, companionship, search and rescue. The kinds of things where horses still made sense, for reasons that weren’t really about economics. But the rest of the horse population did not move into new horse jobs. Because there weren’t any.
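For a sense of scale, the two population figures above can be turned into rates. This is back-of-envelope arithmetic only. It assumes a smooth, constant rate of decline between the two dates, which the real curve almost certainly wasn’t.

```python
# Figures quoted above: US horse population in 1915 and in 1960.
start_year, end_year = 1915, 1960
start_pop, end_pop = 26_000_000, 3_000_000

years = end_year - start_year

# Share of the 1915 population that was gone by 1960.
total_decline = 1 - end_pop / start_pop

# Constant yearly rate that would produce the same end point
# (a simplifying assumption, not a claim about the real year-by-year curve).
annual_rate = (end_pop / start_pop) ** (1 / years) - 1

print(f"Total decline over {years} years: {total_decline:.1%}")
print(f"Equivalent constant annual change: {annual_rate:.1%}")
```

That works out to a loss of a little under nine in ten, which averages out to a few percent a year. A change that size is easy to miss in any single year and impossible to miss across a working lifetime.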

Now it’s our turn. Humans have been the unique engine for doing cognitive work. The general-purpose solution for taking inputs and turning them into useful thinking across an enormous range of jobs. Our modern economy was built on the assumption that humans were the only available cognitive engine.

Now a new cognitive engine has arrived. It’s cheaper per unit of work, it’s more scalable, it doesn’t get tired, and it keeps getting better every year, month and week. All the conditions that displaced horses are here again, and this time they apply to humans.

The horse case is no longer a rough comparison for what’s happening right now. It’s the exact same process happening again. Same role in the system. Same kind of replacement. And there is no good reason to expect a different outcome from the same setup.

The Strongest Counterarguments

Now we can revisit Tabarrok’s piece, because it has the three best pushbacks against the horse analogy.

Tabarrok says humans can adopt technology, but horses can’t. Farmers adopted tractors and stayed productive, so humans should be able to adopt AI and stay relevant. But the technology being replaced doesn’t adopt the replacement technology. It’s true that humans are unique in that it’s at least logically possible - you can use AI as a tool. But it doesn’t hold up economically. Why pay for a human plus AI when AI alone does the job cheaper? The human’s share of that pairing shrinks every time the models improve. It’s a feature of the transition, not a defence against it.

Tabarrok also argues that humans and AI aren’t perfect substitutes. Engines outperformed horses at all of their tasks, and AI doesn’t have that kind of “across the board” superiority over humans. Since AI only marginally outperforms in some domains, humans retain value through specialisation.

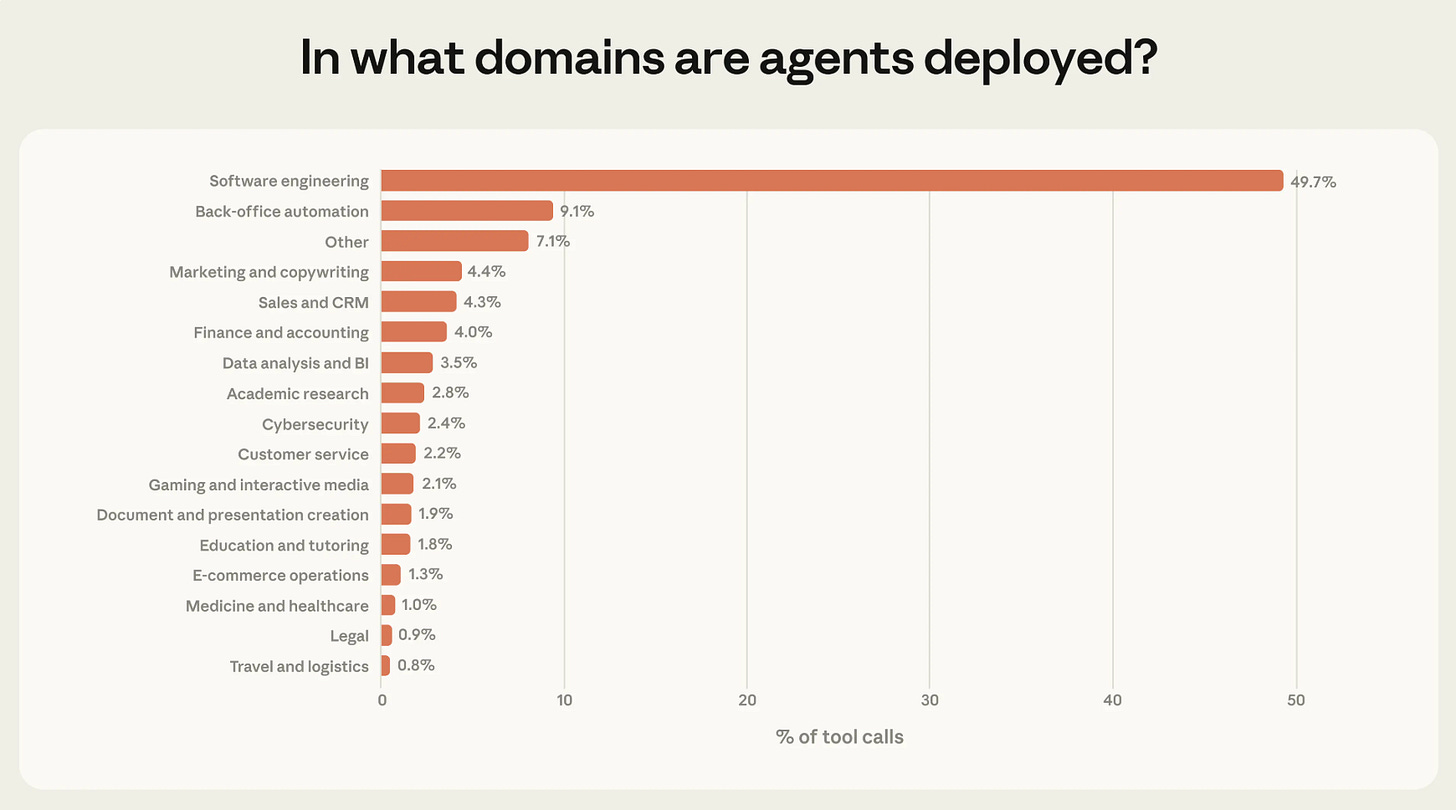

But “perfect substitution” is a bar that has never actually been cleared in any real-world replacement - including the one that ended the horse economy. Horses and engines weren’t perfect substitutes either. What actually matters is whether the substitute is “good enough” across enough tasks that the remaining niches can’t sustain the original population. Rich Sutton’s Bitter Lesson makes a closely related point about AI - general methods that scale with compute have, over time, beaten the precise, handcrafted distinctions we thought mattered. The gaps between human and AI capability today look like exactly those kinds of distinctions. They dissolve under more compute. Software engineers are just the canary in this modern coal mine.

Then there’s the ownership argument, and it is possibly the most well-known:

Humans own AI.

The productivity gains flow to capital, capital is owned by people, and those people keep buying things made by other people. Horses didn’t own anything. Humans own everything. So our analogy should fail at the ownership level.

The problem is:

This is true on average, but false at the individual level.

And it’s the individual level that matters most when you’re trying to figure out what’s going to happen to your own career.

The average human may mathematically own some slice of AI infrastructure. But the actual, typical human owns exactly zero. The income that flows to “human owners of AI” only flows to a minuscule fraction of the global population. The people who already own real equity in compute, energy, and the frontier model companies. Everyone else gets nothing from these productivity gains.
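The gap between “average” and “typical” is the gap between a mean and a median. Here is a toy example with invented numbers: a population of 100 people where one person holds all 1,000 units of AI equity. It is not real ownership data, and the real distribution is less extreme than this, but the shape of the problem is the same.

```python
from statistics import mean, median

# Invented population: 99 people own nothing, one person owns everything.
holdings = [0] * 99 + [1000]

average_holding = mean(holdings)    # what "humans own AI" describes
typical_holding = median(holdings)  # what the person in the middle actually owns

print(f"Average holding: {average_holding}")
print(f"Typical (median) holding: {typical_holding}")
```

The mean says everyone owns ten units. The median says the person in the middle owns none.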

This argument also assumes that humans will keep wanting whatever is being bought and sold. But they might not. If the primary economic activity becomes agents trading with other agents, in things that agents need, then the market for human-stuff becomes a leftover. This is not the centre of the economy, it’s barely even the periphery. The “humans own AI” reassurance assumes that the system will still need human customers. But it doesn’t have to.

The Updated Question

After all this, Mark’s core question still stands. But the refined version we’ve been building toward is the one best suited to applying to ourselves as individuals:

What do I have that infinite tokens couldn’t reproduce?

The original version let you cling to “expertise” or “judgement”, but we’ve already seen those are just tokens. This final version forces the answer to honestly come from outside cognition entirely. All three views point at the same answer.

What can’t be reproduced by infinite tokens? Physical assets. Land. Energy production. Compute infrastructure. Regulatory positions. Trust that’s built up between specific humans over years of sharing time and space. Even digital artefacts can hold value, but only if something non-cognitive is wrapped around them - brand, audience, IP, legal weight. The cognition itself, stripped of the wrapper, is reproducible. The wrapper isn’t.

So, are you a horse?

The question lands differently when you’ve followed the argument all the way down. This isn’t a provocation anymore. It’s a real and serious question that can be given a real answer. The honest answer for most people reading this is yes. You’re a general-purpose cognitive engine being slowly replaced by a cheaper engine that does the same job better.

This diagnosis is harsh, but being honest about this is the only way we can start any useful work. That’s what I’m diving into next here on Flux. In the meantime, I’d love to hear where you land on the question: What do you have that infinite tokens couldn’t reproduce?

Try This Skill

To make this important topic more accessible I’ve created a Skill that you can use with your favourite AI in 3 easy steps. Here’s how:

Download the SKILL.md file

Upload it to your AI

Then just say “Run this skill”

Flux ‘Are You A Horse?’ Explorer (download) - Explore and debate the ideas in this article with an AI that knows the arguments. Get more details about any specific point, or challenge the ideas and test the boundaries as you form your own opinion.

Note: It’s important to download this SKILL.md file, then upload it directly to your AI rather than just giving it the web link. We also recommend that you use one of the leading models from the 3 frontier labs (e.g. Claude, Gemini or ChatGPT). If you use a lower-level model then your results may vary. And of course, if you find any issues, or feel like you’ve found a real flaw in my arguments, please let me know. I love constructive feedback and real debate.